AI Writes Fast. You Ship Bugs.

I let AI write a feature. The tests passed. I shipped it.

Then a user found a bug the test suite never covered, because I never thought to test for it. The gap in AI coding workflows isn't that AI writes bad code. It's that we treat it like a typewriter: we ask it to write, but never to verify.

A few weeks ago, I watched Aaron Francis's course on AI and Claude Code. Two commands from it changed how I work. They plug the holes AI workflows tend to leave open.

The first is new-tests. After any feature or bug fix, it audits your codebase for missing tests: edge cases, uncovered jobs, paths you forgot to exercise.

The second is finalize, which you run before committing. It tightens the loose ends AI leaves behind: unused imports, sloppy naming, half-finished cleanup.

Together, they take a diff from "looks fine" to "I'm confident shipping this." I've adapted both for OpenCode, the agent I use daily.

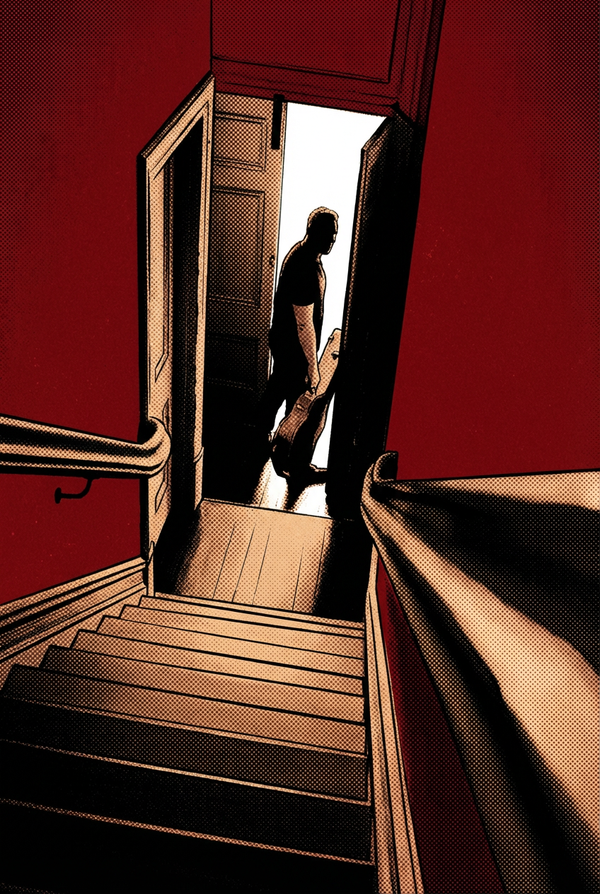

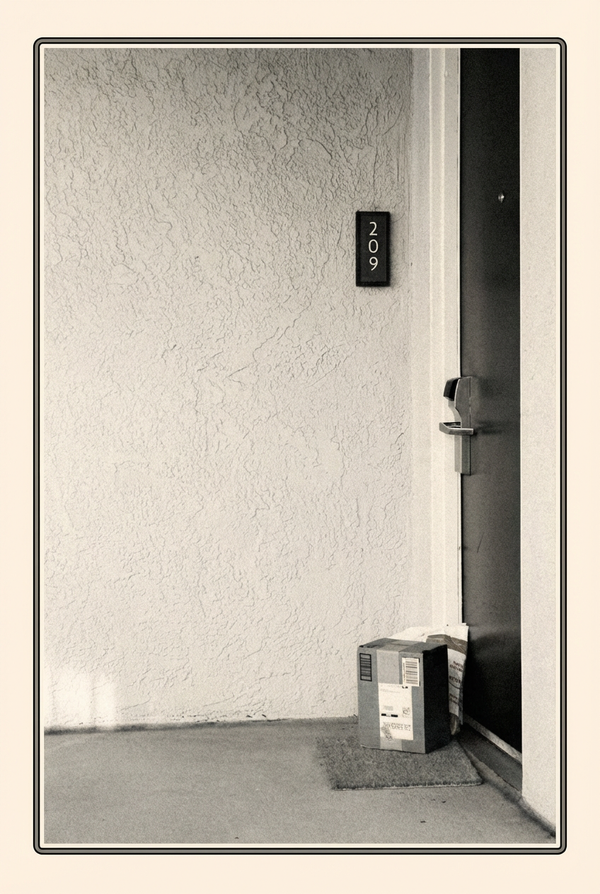

Here's the kind of thing that happens without them: a user could view someone else's private content by changing the resource ID in the URL. During implementation, both the AI and I forgot to add an authorization check. The code worked. The tests passed. And neither of us noticed the door was unlocked.
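To make that concrete, here is a minimal sketch of the missing check and the test that would have caught it. It is not the code from my project: the `Note` model, the in-memory `NOTES` store, the `get_note` function, and the `Forbidden` exception are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass


class Forbidden(Exception):
    """Raised when a user requests a resource they are not allowed to see."""


@dataclass
class Note:
    id: int
    owner_id: int
    body: str
    private: bool = True


# Hypothetical in-memory store standing in for a database table.
NOTES = {
    1: Note(id=1, owner_id=10, body="Alice's draft"),
    2: Note(id=2, owner_id=20, body="Bob's draft"),
}


def get_note(note_id: int, current_user_id: int) -> Note:
    note = NOTES[note_id]
    # The check that was missing: finding the record by ID is not enough,
    # the requester also has to be allowed to see it.
    if note.private and note.owner_id != current_user_id:
        raise Forbidden(f"user {current_user_id} cannot view note {note_id}")
    return note


def test_owner_can_view_own_note():
    assert get_note(1, current_user_id=10).body == "Alice's draft"


def test_other_user_cannot_view_private_note():
    # The test nobody wrote: change the ID in the URL, expect a refusal.
    try:
        get_note(2, current_user_id=10)
    except Forbidden:
        return
    raise AssertionError("expected Forbidden")
```

The happy-path test passes with or without the `if`. Only the second test fails when the check is missing, which is exactly the kind of gap that is easy to skip when the code arrives in seconds.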

When I write code myself, I think while I type. The slow pace gives me room to notice gaps. AI compresses hours of coding into seconds. You get the code faster, but you lose the thinking time: the minutes where your mind wanders and spots the thing you almost missed.

Sometimes AI catches gaps on its own. Often it doesn't. Speed papers over holes. Everything looks solid until someone leans on it.

Before these commands, my workflow was: describe the feature, review the output, steer when it goes wrong, add some tests, manually verify, ship. It worked most of the time.

But the tests AI wrote covered only the happy path. Edge cases, error handling, the things that break in production: I'd discover those later, usually when a user reported them.

These commands add structure: make it work, find what you haven't tested, clean up after yourself. Instead of asking AI to write and ship, you ask it to review its own work.

AI loves writing code. It doesn't love reviewing what it left behind. finalize forces it to confront its own mess: debugging code from failed test runs, breadcrumbs from abandoned approaches when I steered mid-session. It shrinks the code before it hits the repository.

You still move fast.

Here's what each one does:

new-tests reviews the conversation to understand what changed: what feature was built, what files were modified, what behavior was introduced. It checks the current diff. Then it hunts for meaningful gaps: untested methods, unhandled errors, uncovered code paths.

It's surgical, not exhaustive. It skips getters, setters, framework code, and anything already well-tested. It finds related test files, writes tests for each gap following project conventions, and verifies everything passes.
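The command itself is a prompt for an agent, not a script, but one of the gaps it looks for can be approximated mechanically. The sketch below is a crude heuristic of my own, not part of Aaron's command: it flags changed Python files that have no test file named `test_<module>.py`. The function name and the naming convention are assumptions; adjust them to your project.

```python
from pathlib import PurePosixPath


def files_missing_tests(changed: list[str], test_files: list[str]) -> list[str]:
    """Return changed source files with no test file named test_<stem>.py."""
    # Assumed convention: tests/test_billing.py covers billing.py.
    tested_stems = {
        PurePosixPath(t).stem.removeprefix("test_") for t in test_files
    }
    missing = []
    for path in changed:
        p = PurePosixPath(path)
        # Skip non-Python files, test files themselves, and package markers.
        if p.suffix != ".py" or p.stem.startswith("test_") or p.stem == "__init__":
            continue
        if p.stem not in tested_stems:
            missing.append(path)
    return missing


if __name__ == "__main__":
    changed = ["app/billing.py", "app/jobs/export.py", "README.md"]
    tests = ["tests/test_billing.py"]
    print(files_missing_tests(changed, tests))
```

A check like this only tells you a file has no tests at all. It cannot tell you which edge cases inside a tested file are uncovered, which is where the agent reading the conversation and the diff earns its keep.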

finalize reviews the conversation to understand the full arc of the session: what was built, what approaches were tried and abandoned, what files changed. Then it goes digging: false starts, duplicated logic, naming inconsistencies, over-engineering.

For each file, it removes dead code, consolidates, simplifies, and keeps things consistent. It runs the test suite to verify nothing broke. Think of it as an archaeological dig through the AI's session, brushing dirt off everything it tried and left behind.
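One slice of that cleanup, leftover debugging code, is simple enough to sketch as a script. This is an illustration under my own assumptions, not what finalize actually runs: the pattern list and the `find_debug_leftovers` name are hypothetical, and it only inspects lines added in a unified diff.

```python
import re

# Illustrative patterns only; tune them for your own stack.
DEBUG_PATTERNS = [
    re.compile(r"\bprint\("),
    re.compile(r"\bbreakpoint\(\)"),
    re.compile(r"\bpdb\.set_trace\(\)"),
    re.compile(r"\bconsole\.log\("),
]


def find_debug_leftovers(diff_text: str) -> list[tuple[str, str]]:
    """Return (file, line) pairs for added lines that look like debug code."""
    hits = []
    current_file = "?"
    for raw in diff_text.splitlines():
        if raw.startswith("+++ "):
            # Unified diff file header, e.g. "+++ b/app/views.py".
            current_file = raw[4:].removeprefix("b/")
            continue
        if raw.startswith("+"):
            added = raw[1:]
            if any(p.search(added) for p in DEBUG_PATTERNS):
                hits.append((current_file, added.strip()))
    return hits
```

You could feed it the output of `git diff` before committing. It catches the obvious breadcrumbs; the abandoned approaches and duplicated logic still need something that understands the session, which is the point of handing the job to the agent.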

To get started, drop the command files in your agent's commands folder. For OpenCode, that's ~/.config/opencode/commands/. Aaron's commands were written for Claude Code. I asked AI to adapt them for OpenCode, and you can do the same for any agent. Find your agent's docs on custom commands, or ask your coding agent to import Claude Code commands for you.

The exact prompts come from Aaron's paid course, so I can't share them. But the pattern is what matters, not the specific prompts. Start with the pattern described above, then adapt it to how you review code. Everyone's process is different. Your commands should reflect your thinking.

AI tends to make codebases swell. Left alone, small cracks accumulate. You see issues but push through, because there's always another feature to build. Eventually you face a choice: abandon the project or audit an overwhelming codebase. The alternative is keeping things clean as you go, catching cracks before they compound.

As AI gets faster and more capable, this pattern matters more. Speed without verification isn't progress. It's just technical debt arriving faster.

AI writes code faster than we can think about it. These two commands give you back the thinking time that speed took away.

If you want to try this, Aaron Francis's course at faster.dev covers the original commands. Adapt them to your agent, your workflow, your thinking. The pattern is simple: verify what you built, then clean up after yourself.